In my first three API Management posts, we discussed “What are APIs? (The Technical Perspective)”, “What is API Management?”, and “The Anatomy of an API Management Solution”. Continuing with this theme, we will explore the API Management Stack. So, what do I mean by API Management Stack?

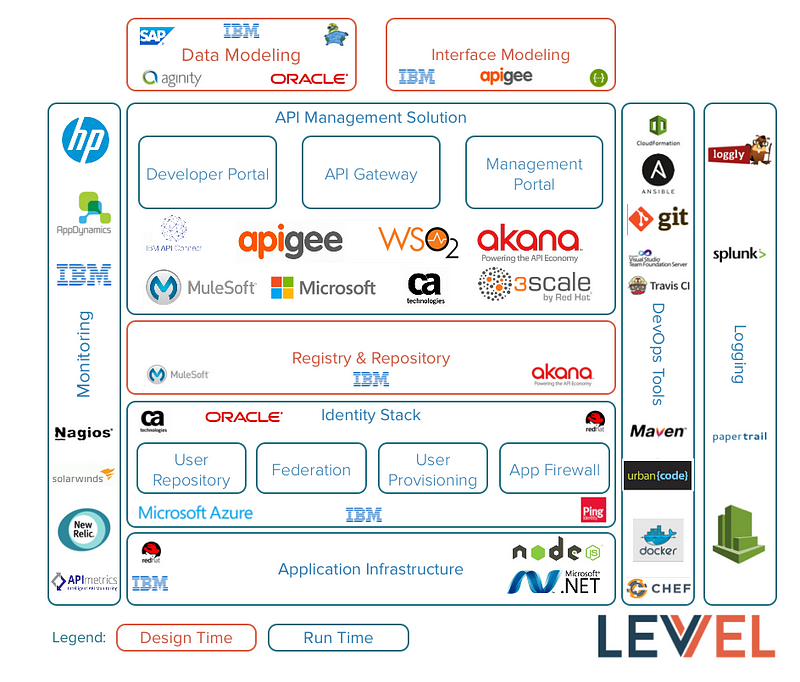

The API Management Stack is the API Management solution plus all the ancillary dependencies needed to deliver and manage a functioning API Management platform: one that can support hundreds or thousands of APIs, covering a variety of consumer-friendly, front-end-facing APIs, back-end integration patterns for the enterprise, and a bit in between. As always, I am focused on the enterprise, but this material should be relevant to many other organizations as well, although smaller organizations probably won’t have as many APIs. Recently, I was discussing API Management with an organization and the conversation went in this direction; they were starting from scratch, including with DevOps, and came to the conclusion that they didn’t just need an API Management solution, they needed an API Management Stack. We put together the attached graph outlining the dependencies and the players in each space. In future blog posts, we will dig into each of these in more detail.

From our previous discussion, the API Management solution includes:

- API Gateway (Run-time Gateway)

- Developer Portal

- Management Portal

An API Management Solution has the following dependencies:

- DevOps Tooling

- Data Modeling

- Interface Modeling

- Registry and Repository

- Monitoring Platform

- Synthetic Transactions

- Log Server (think Splunk-like)

- IAM Stack (Identity Provider, LDAP, Federation Server, PDP/PEP)

- App Server

Each of these tools also requires a decision on whether to host it on premises or in the cloud.

DevOps Tooling

DevOps includes:

- continuous delivery

- automated testing

- automation

- artifact management / repositories

- self service

Version Control & Artifact Management: Artifactory, Git, TFS

App Build Server: Jenkins, TravisCI, Maven/Ant

App Deployment: uDeploy, Docker UCP, CodeDeploy

Infrastructure as Code: Docker, Chef, Ansible, CloudFormation
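Continuous delivery and automated testing come down to a gate: a change to an API is deployed only after every earlier stage has passed. The following Python sketch shows that ordering with hypothetical stages. In practice, the build and deployment tools listed above provide this behavior; `run_pipeline` is an illustrative helper, not an API of any of them.

```python
from typing import Callable, List, Tuple

# A stage is a (name, action) pair; the action returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> Tuple[List[str], bool]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that ran and whether all of them passed,
    so a deploy stage placed after the tests never runs when a test fails.
    """
    ran: List[str] = []
    for name, action in stages:
        ran.append(name)
        if not action():
            return ran, False
    return ran, True


if __name__ == "__main__":
    # Hypothetical stages; real ones would invoke the build, test, and
    # deployment tools for an API or gateway proxy.
    stages = [
        ("build", lambda: True),
        ("unit-tests", lambda: True),
        ("contract-tests", lambda: False),  # simulate a failing test stage
        ("deploy-to-gateway", lambda: True),
    ]
    print(run_pipeline(stages))  # (['build', 'unit-tests', 'contract-tests'], False)
```

Putting deployment last in the list is the whole design choice here: a failing test stage short-circuits the run, so nothing reaches the gateway.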

Thanks to James Denman for the contribution to this section.

Monitoring Platform

A comprehensive monitoring platform provides:

- network monitoring

    - host/IP pings

    - TCP port checks

    - SSL/TLS handshake checks

- log scraping (scanning)

- OS monitoring

    - process checks on OS instances

    - system resource utilization (CPU, memory, disk I/O, network I/O)

- synthetic transactions (test calls that simulate real traffic)

- transaction monitoring

    - transaction response time monitoring

    - transaction volume monitoring

    - error frequency monitoring
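Two of the network checks listed above, TCP port checks and SSL/TLS handshake checks, are simple enough to sketch directly. This Python example uses only the standard library; the function names and the host used in the usage note are hypothetical placeholders, and a monitoring platform would run checks like these on a schedule and feed the results into its alerting.

```python
import socket
import ssl


def tcp_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def tls_handshake_ok(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TLS handshake, including certificate and host name
    verification, completes against host:port."""
    context = ssl.create_default_context()
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            with context.wrap_socket(sock, server_hostname=host):
                return True
    except OSError:  # ssl.SSLError is a subclass of OSError
        return False
```

For example, `tls_handshake_ok("api.example.com")` returns False when the certificate has expired or does not match the host name, which a plain port check would not catch.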

An advanced system may provide self-healing that will attempt to automatically fix the problem by restarting systems or processes, reapplying configuration from source control, or redeploying application code from a build server, among other remediations. The monitoring platform must also provide a mechanism for viewing and searching collected data and for retaining historical data.
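The escalating remediations described above can be sketched as trying each one in turn and re-checking health after every step. This is a minimal Python sketch under the assumption that each remediation is a callable supplied by the caller; `self_heal` and the remediation names are hypothetical and are not part of any monitoring product mentioned here.

```python
from typing import Callable, List, Tuple

# A remediation is a (name, action) pair, tried from least to most disruptive.
Remediation = Tuple[str, Callable[[], None]]


def self_heal(is_healthy: Callable[[], bool],
              remediations: List[Remediation]) -> str:
    """Apply remediations in order until the health check passes.

    Returns "healthy" if no action was needed, the name of the remediation
    that restored health, or "escalate" if none of them worked and a person
    should be alerted.
    """
    if is_healthy():
        return "healthy"
    for name, action in remediations:
        action()
        if is_healthy():
            return name
    return "escalate"


if __name__ == "__main__":
    # Simulated service that only recovers once configuration is reapplied.
    state = {"healthy": False}
    result = self_heal(
        lambda: state["healthy"],
        [
            ("restart-process", lambda: None),
            ("reapply-config-from-source-control",
             lambda: state.update(healthy=True)),
            ("redeploy-from-build-server", lambda: None),
        ],
    )
    print(result)  # reapply-config-from-source-control
```

Ordering the list from cheapest to most disruptive means a simple restart is always attempted before a full redeploy.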

The monitoring platform is continuously collecting data on, ideally, every aspect of the system. An organization will usually want to receive some type of alert (email, pager, console, text message, etc.) when certain predefined thresholds are reached. For example, if more than X errors have occurred in the last N seconds, or if the average response time of the XYZ API was greater than Y milliseconds, then send an alert. The configuration for this functionality can become quite lengthy.
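A threshold rule like the one in the example above (too many errors in a recent window, or an average response time above a limit) can be sketched as a sliding window over recent samples. This minimal Python illustration assumes the platform feeds it one sample per API call; `ThresholdAlert` is a hypothetical class, and the products listed below express the same idea in their own configuration.

```python
import time
from collections import deque
from typing import Deque, Optional, Tuple


class ThresholdAlert:
    """Sliding-window alert rule: fire when more than max_errors errors
    occurred in the last window_seconds, or when the average response
    time in that window exceeds max_avg_ms."""

    def __init__(self, window_seconds: float, max_errors: int, max_avg_ms: float):
        self.window_seconds = window_seconds
        self.max_errors = max_errors
        self.max_avg_ms = max_avg_ms
        # Each sample is (timestamp, response_time_ms, is_error).
        self.samples: Deque[Tuple[float, float, bool]] = deque()

    def record(self, response_ms: float, is_error: bool,
               now: Optional[float] = None) -> None:
        now = time.monotonic() if now is None else now
        self.samples.append((now, response_ms, is_error))
        self._expire(now)

    def _expire(self, now: float) -> None:
        # Drop samples that have fallen out of the window.
        while self.samples and now - self.samples[0][0] > self.window_seconds:
            self.samples.popleft()

    def check(self, now: Optional[float] = None) -> Optional[str]:
        """Return an alert message, or None if no threshold is breached."""
        now = time.monotonic() if now is None else now
        self._expire(now)
        if not self.samples:
            return None
        errors = sum(1 for _, _, err in self.samples if err)
        if errors > self.max_errors:
            return f"{errors} errors in the last {self.window_seconds:g}s"
        avg = sum(ms for _, ms, _ in self.samples) / len(self.samples)
        if avg > self.max_avg_ms:
            return f"average response time {avg:.0f} ms exceeds {self.max_avg_ms:g} ms"
        return None
```

Passing `now` explicitly keeps the rule easy to test; in production it defaults to a monotonic clock.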

Options: HP NNMi, Nagios, Tivoli, SiteScope, AppDynamics, New Relic

Log Server

A place to write logs, errors, statistics, and anything else important. I like solutions that support syslog, in particular syslog over TLS/TCP. For Apigee Edge Public Cloud, this is pretty much the only out-of-the-box option, although you can always write something completely custom in one of the supported languages. Apigee Edge On Premise also allows writing to the local file system. WebSphere DataPower supports a wide variety of logging protocols (NFS, local file system, syslog over UDP, syslog over TCP, syslog-ng, and others), but not all of that functionality is necessarily exposed in the API Management Console, so you may have to get your hands dirty in the DataPower Web Console.

Options: Syslog server (comes with most Unix-like operating systems), Loggly, Splunk
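To make syslog over TLS concrete, here is a minimal Python sketch of the wire format: an RFC 5424 message, framed with the octet-counting method from RFC 5425, sent over a TLS connection. It assumes a collector that accepts syslog over TLS on port 6514 (the port RFC 5425 registers for this purpose); the facility, severity, app name, and host are placeholder choices, and none of this reflects how Apigee or DataPower are actually configured.

```python
import socket
import ssl
from datetime import datetime, timezone

LOCAL0 = 16  # syslog facility code for local0
INFO = 6     # syslog severity code for informational


def format_rfc5424(app_name: str, msg: str, hostname: str = "-",
                   facility: int = LOCAL0, severity: int = INFO) -> str:
    """Build an RFC 5424 message with no structured data ("-" is NILVALUE)."""
    pri = facility * 8 + severity
    timestamp = datetime.now(timezone.utc).isoformat()
    # <PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID MSGID STRUCTURED-DATA MSG
    return f"<{pri}>1 {timestamp} {hostname} {app_name} - - - {msg}"


def frame_octet_counted(message: str) -> bytes:
    """RFC 5425 octet-counting framing: byte length, a space, the message."""
    payload = message.encode("utf-8")
    return str(len(payload)).encode("ascii") + b" " + payload


def send_syslog_tls(host: str, message: str, port: int = 6514) -> None:
    """Send one framed message to a syslog-over-TLS collector."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=5) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            tls.sendall(frame_octet_counted(message))
```

A call such as `send_syslog_tls("logs.example.com", format_rfc5424("orders-api", "quota exceeded"))` would deliver one event; the length prefix lets the collector find message boundaries on a stream, which plain UDP syslog never needed.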

IAM (Identity and Access Management) Stack

Next, we have the Identity Stack. There is a very good chance that any established organization moving into the API Management space already has a long history with IAM-related technologies. Furthermore, such an organization likely owns the full identity stack of one of the major security vendors (CA, Microsoft, Ping, IBM, many others). For an initial API Management project, the focus will be on integrating with the existing Identity Stack. There are several touch points to consider:

- run-time gateway

    - authentication step

    - authorization step

- developer portal

    - single sign-on to the developer portal

    - role definitions and validation

    - integration with the IAM stack for self-service activities (create application, delete application, generate client secret, API subscriptions, and others depending on use cases)

- management portal

    - single sign-on to the management portal

    - role definitions and validation
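At the run-time gateway, the authentication and authorization steps line up with the PEP (policy enforcement point) and PDP (policy decision point) roles in the IAM stack. The following Python sketch is a toy model of that split, assuming an opaque bearer token the gateway can look up. `token_store`, `PolicyDecisionPoint`, and `gateway_enforce` are hypothetical stand-ins for what a real identity provider (token introspection or signed-token validation) and a vendor PDP would do.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set, Tuple


@dataclass
class Principal:
    subject: str
    roles: Set[str] = field(default_factory=set)


class PolicyDecisionPoint:
    """Toy PDP: maps (HTTP method, path prefix) to the roles allowed."""

    def __init__(self, rules: Dict[Tuple[str, str], Set[str]]):
        self.rules = rules

    def is_permitted(self, principal: Principal, method: str, path: str) -> bool:
        for (rule_method, prefix), allowed_roles in self.rules.items():
            if method == rule_method and path.startswith(prefix):
                return bool(principal.roles & allowed_roles)
        return False  # default deny when no rule matches


def gateway_enforce(token: str, method: str, path: str,
                    token_store: Dict[str, Principal],
                    pdp: PolicyDecisionPoint) -> int:
    """PEP at the gateway: authenticate, then authorize.

    Returns an HTTP status code: 401 for an unknown token, 403 when the
    PDP denies the request, 200 when it may be forwarded to the back end.
    """
    principal: Optional[Principal] = token_store.get(token)  # authentication
    if principal is None:
        return 401
    if not pdp.is_permitted(principal, method, path):  # authorization
        return 403
    return 200
```

Keeping the decision logic behind `is_permitted` mirrors the PEP/PDP separation: the gateway only enforces, so policies can change without touching gateway code.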

All of this falls into a broad category that I like to call security integration, a topic that can become quite involved. Each of the touch points listed above will get its own blog post in the future.

Security Stack Vendors:

- IBM

- Microsoft

- Red Hat

- Ping

- Oracle

- many others.

Options for Security Integration:

- out-of-the-box functionality in the API Management components (depends on vendor and use case)

- custom code in the various API Management components

- separate layer of custom code.

App Server

If you are using API Management, chances are you have developed APIs that need a place to live. It’s also possible that you’re cobbling together numerous third-party APIs, but for this conversation, let’s assume the former.

The choice of an API run-time environment (application server) depends on the target language. This is usually dictated by the preferences of the team(s) making the decision, the skill sets that exist in-house, and the history of the organization.

Options: Red Hat JBoss EAP, Microsoft .NET, IBM WebSphere Application Server, Node.js, many others

Data Modeling Tools

Ideally, a large organization has a well-developed data modeling process for data at rest (DDL) and data in motion (XSD and JSON Schema). The same tools would be used for both, and a high-level abstraction language (UML, maybe others) would be used to describe data structures. The enterprise should have a library of data models that can be reused across projects and platforms to describe the common data elements that business systems process. There should also be a data modeling group that works closely with application teams and DBAs. If this is missing from your enterprise, investigate further.
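To illustrate a reusable model for data in motion, here is a minimal Python sketch that keeps a small, hypothetical model library as JSON Schema documents, with `Customer` reusing a shared `Address` model through `$ref`. The `check` function is deliberately simplified: it understands only `type`, `required`, `properties`, and local `$ref`, and is not a JSON Schema validator. A real project would rely on the modeling tools below and a full validator.

```python
from typing import Any, Dict, List

# Hypothetical enterprise library of reusable data models (JSON Schema).
MODEL_LIBRARY: Dict[str, Dict[str, Any]] = {
    "Address": {
        "type": "object",
        "required": ["street", "city", "postalCode"],
        "properties": {
            "street": {"type": "string"},
            "city": {"type": "string"},
            "postalCode": {"type": "string"},
        },
    },
    "Customer": {
        "type": "object",
        "required": ["id", "name", "billingAddress"],
        "properties": {
            "id": {"type": "string"},
            "name": {"type": "string"},
            # Reuse the shared Address model instead of redefining it.
            "billingAddress": {"$ref": "#/definitions/Address"},
        },
    },
}

_JSON_TYPES = {"object": dict, "string": str, "array": list, "boolean": bool}


def check(instance: Any, schema: Dict[str, Any], path: str = "$") -> List[str]:
    """Simplified check: resolve a local $ref, then validate only 'type',
    'required', and 'properties'. Returns a list of error messages."""
    if "$ref" in schema:
        schema = MODEL_LIBRARY[schema["$ref"].rsplit("/", 1)[-1]]
    expected = _JSON_TYPES.get(schema.get("type"))
    if expected is not None and not isinstance(instance, expected):
        return [f"{path}: expected {schema['type']}"]
    errors: List[str] = []
    if isinstance(instance, dict):
        for name in schema.get("required", []):
            if name not in instance:
                errors.append(f"{path}: missing '{name}'")
        for name, sub in schema.get("properties", {}).items():
            if name in instance:
                errors.extend(check(instance[name], sub, f"{path}.{name}"))
    return errors
```

Because `Address` is defined once, a change to it (say, a new required field) applies to every model that references it, which is the point of keeping a shared library.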

Options: PowerDesigner, RSA, Toad, Aginity Workbench, SQL Developer, SQL*Plus, iNav

Thanks to Linda the DBA for suggested tools mentioned in this section.

Interface Modeling Tools

Building on top of the Data Modeling paradigm is Interface Modeling. This discipline assumes a top-down API development approach in which the interface is defined and mostly finalized before development activities commence. Interface Modeling produces the API interface definition (Swagger or similar), using the JSON or XML models developed by the data modelers. Again, a higher-level abstraction language such as UML is likely used to create the interface definition.
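Because the interface definition reuses models from the data modelers, a Swagger 2.0 document can point at a model through `$ref` under `definitions` instead of redefining it. The Python sketch below builds such a document as a dictionary and prints it as JSON, which could then be loaded into a tool like Swagger Editor. The `Customer` model, the `/customers/{id}` path, and the API title are hypothetical placeholders.

```python
import json

# Data model supplied by the data modeling team (hypothetical example).
customer_model = {
    "type": "object",
    "required": ["id", "name"],
    "properties": {
        "id": {"type": "string"},
        "name": {"type": "string"},
    },
}

# Swagger 2.0 interface definition that references the model instead of
# copying its fields into the response.
interface = {
    "swagger": "2.0",
    "info": {"title": "Customer API", "version": "1.0.0"},
    "basePath": "/v1",
    "paths": {
        "/customers/{id}": {
            "get": {
                "parameters": [
                    # Path parameters must be marked required in Swagger 2.0.
                    {"name": "id", "in": "path", "required": True, "type": "string"}
                ],
                "responses": {
                    "200": {
                        "description": "The requested customer",
                        "schema": {"$ref": "#/definitions/Customer"},
                    },
                    "404": {"description": "Customer not found"},
                },
            }
        }
    },
    "definitions": {"Customer": customer_model},
}

if __name__ == "__main__":
    print(json.dumps(interface, indent=2))
```

In a top-down workflow, a document like this is reviewed and largely frozen before any gateway proxy or back-end code is written.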

Options: IBM Rational, Swagger Editor, Apigee 127

Registry and Repository (APIs and Services)

Many will likely remember this concept from the SOA days: a Services Registry and Repository. I suppose we could call it an API Registry and Repository now, but let’s not get carried away. For many enterprises, the Registry and Repository concept is still present; there will be a desire or assumption that it will serve as the source of truth for API interfaces and API metadata. If there is no legacy Registry and Repository, then the Developer Portal would likely fill this role.

Options: WebSphere Services Registry and Repository (WSRR), Akana (formerly SOA Software) Lifecycle Manager for APIs, any content management system and a lot of discipline.
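The “source of truth” role can be sketched as a registry keyed by API name and version that stores each interface definition alongside its metadata. This in-memory Python example is a hypothetical illustration, not the data model of WSRR or any other product; treating published versions as immutable is an assumed governance rule, the kind of discipline the options above mention.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class ApiEntry:
    name: str
    version: str                 # dotted numeric version, e.g. "1.2.0"
    interface: dict              # e.g. the Swagger document for this version
    metadata: Dict[str, str] = field(default_factory=dict)


class ApiRegistry:
    """Minimal in-memory registry with one entry per (name, version)."""

    def __init__(self) -> None:
        self._entries: Dict[Tuple[str, str], ApiEntry] = {}

    def publish(self, entry: ApiEntry) -> None:
        key = (entry.name, entry.version)
        if key in self._entries:
            # Published versions are immutable; publish a new version instead.
            raise ValueError(f"{entry.name} {entry.version} already registered")
        self._entries[key] = entry

    def latest(self, name: str) -> Optional[ApiEntry]:
        """Return the highest registered version of an API, or None."""
        versions = [e for (n, _), e in self._entries.items() if n == name]
        if not versions:
            return None
        # Compare numerically so that 1.10.0 sorts after 1.9.0.
        return max(versions, key=lambda e: tuple(int(p) for p in e.version.split(".")))
```

Comparing version parts as integers rather than strings is the detail most ad hoc registries get wrong.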